AI Agents Are Entering the Trough of Disillusionment — What That Means for Your Business

The hype cycle has a pattern, and AI agents are following it. After two years of vendors promising autonomous agents that would transform every business function, the reality is landing: 95% of organizations report zero return on investment in their AI implementations, according to MIT research. McKinsey finds that among companies under $100 million in revenue, only 29% have achieved any meaningful scale with AI agents at all.

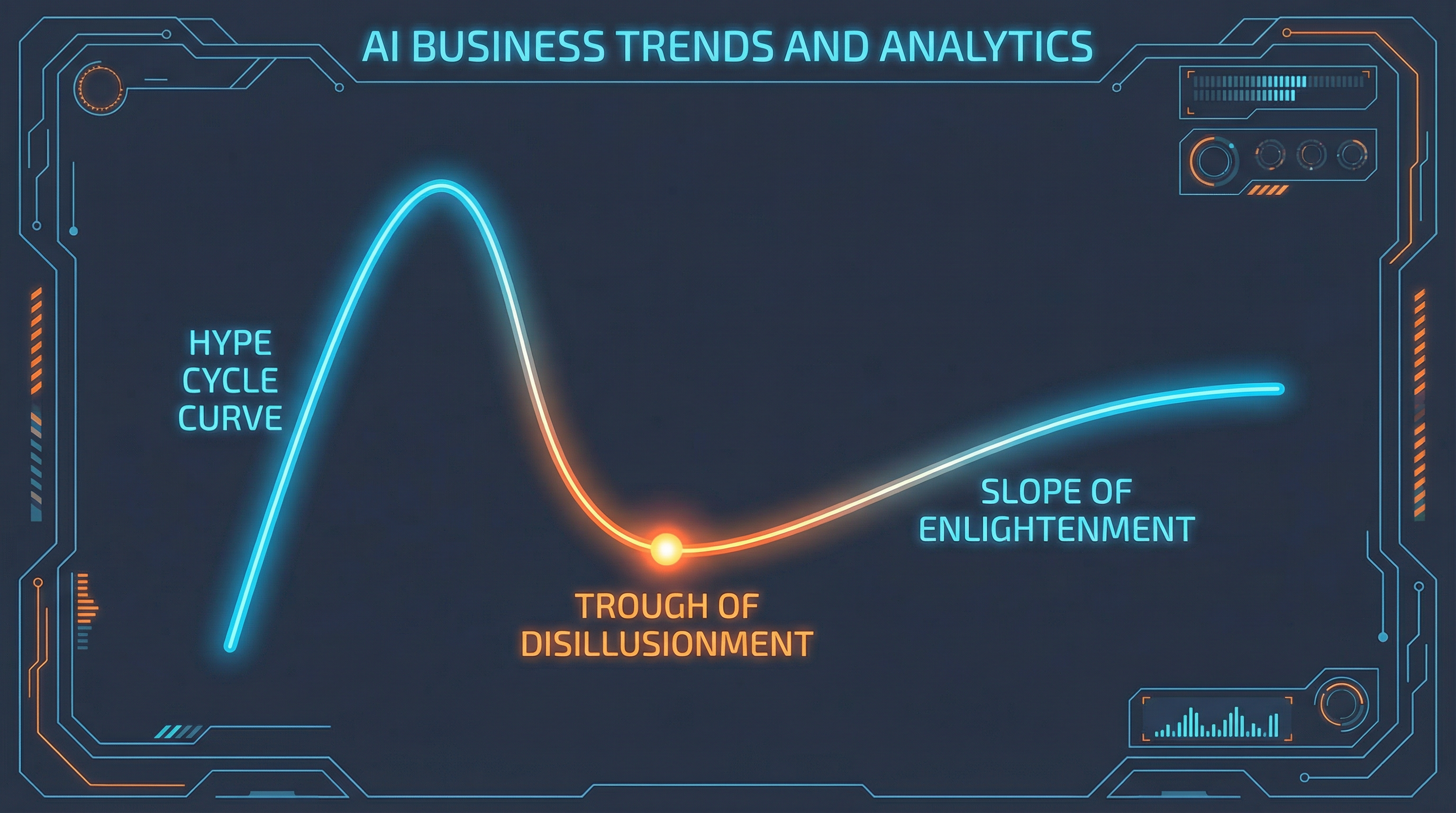

Gartner's latest Hype Cycle for AI places AI agents at the Peak of Inflated Expectations — and analysts are already warning that the descent into the Trough of Disillusionment is coming. The question for businesses that have invested in agent technology, or are planning to, is not whether this is happening. It's what to do with that information.

What the Trough of Disillusionment Actually Means

The Gartner Hype Cycle describes the trough as the phase where "interest wanes as experiments and implementations fail to deliver." Technologies that reach this stage aren't necessarily bad — many of the most foundational enterprise technologies in use today passed through it. What the trough reveals is the gap between what a technology promises in demos and what it delivers in production.

For AI agents specifically, Gartner analyst Arun Chandrasekaran put it directly: "We're in the super early stage of agents. The technology is not yet ready for most high-stakes business applications." His colleague Birgi Tamersoy explained the underlying issue: "You cannot automate something that you don't trust, and many of these AI agents are LLM-based right now, which means that their brains are generative AI models, and there is an uncertainty and reliability concern there."

That's the core problem. LLM-based agents are probabilistic systems. They're not deterministic. In controlled demos, they appear capable of almost anything. In production, where edge cases multiply and error costs are real, their failure modes are unpredictable enough that many organizations have pulled back.

Why Agents Failed to Deliver at Scale

Understanding why agents are disappointing in practice is more useful than the failure statistic alone.

The Error Compounding Problem

A single AI agent making decisions with 90% accuracy sounds reasonable. A multi-agent workflow where three agents each make decisions with 90% accuracy produces a combined accuracy of 73% — and that assumes errors don't cascade. In practice, a wrong decision in step one of a multi-step agent workflow often creates worse inputs for step two, compounding rather than stabilizing. Gartner's own analysis notes that "multi-agentic workflows create a compounded risk of hallucinations."

For business processes where errors have meaningful consequences — customer-facing decisions, financial operations, compliance-sensitive workflows — this compounding effect is disqualifying at current reliability levels.

The Trust Gap

Automation only works when humans trust the system enough to not constantly override it. Organizations that deployed AI agents quickly discovered that their teams did not trust agent outputs without verification — which often meant agents created more work, not less. Users were checking every output. Managers were reviewing every decision. The efficiency gains evaporated in human oversight costs.

This is not a minor implementation problem. It reflects a fundamental mismatch between the current reliability of LLM-based agents and the reliability threshold required for genuine autonomous operation.

Integration With Legacy Systems

Most enterprise environments were not built for AI agents. Connecting an agent to a patchwork of legacy databases, ERP systems, and decades-old APIs requires significant integration work that vendor demos never show. Carnegie Mellon research found that agents make too many mistakes in complex, real-world environments for businesses to rely on them for processes involving significant stakes — and real-world environments are almost always more complex than controlled evaluations.

The Security Surface Problem

AI agent security represents a genuinely new threat category. Agents with access to systems, databases, and external APIs create attack surfaces that traditional security tools weren't designed for. Prompt injection attacks — where malicious content embedded in data manipulates agent behavior — have no clean analog in classical security thinking. Organizations that moved fast on agent deployment often did so before the security implications were fully understood.

What's Still Working: Where Agents Deliver Real Value

The trough of disillusionment is not a verdict against agent technology. It's a correction of over-inflated expectations toward more realistic ones. Agents are delivering measurable value in specific, well-scoped deployments.

Narrow, High-Volume, Low-Stakes Tasks

Agents reliably handle tasks that are repetitive, well-defined, and where individual errors are recoverable. Document classification, initial customer query routing, data extraction and formatting, and internal knowledge retrieval are all areas where agent deployments are showing real productivity gains.

The pattern in successful deployments is specificity. A general-purpose agent that "handles customer service" underperforms. An agent that classifies incoming support tickets into predefined categories and routes them to the correct queue consistently delivers.

Developer and Technical Workflows

AI coding agents have demonstrated measurable productivity improvements in software development — GitHub's data shows 43 million pull requests merged monthly in 2025, a 23% year-over-year increase, with AI agents contributing meaningfully to that acceleration. Technical workflows, where outputs can be verified by execution (code either runs or it doesn't), suit the current reliability profile of agents better than open-ended business decision tasks.

Augmentation, Not Autonomy

The deployments that consistently deliver ROI position agents as tools that surface information, draft outputs, and flag decisions for human review — rather than autonomous actors that complete workflows end-to-end. This is a fundamentally different use case than what most agent vendors were selling in 2024, and it's a more honest one.

How to Be in the 5% That Makes It Work

The organizations achieving real returns from AI agent investment share a set of characteristics that are learnable.

1. Start With the Constraint, Not the Capability

The failure mode in most agent deployments was capability-led: "Look what this agent can do — now let's find a use case." The 5% inverts that: "Here is a specific bottleneck in our operations — can an agent solve it reliably enough to justify the implementation cost?"

This shifts the evaluation criteria from demo impressiveness to production reliability in a specific, bounded context.

2. Define Reliability Requirements Before Deployment

Before an agent goes into production, define the minimum acceptable reliability rate for that specific task. For a low-stakes document classifier, 85% accuracy might be sufficient. For a system that influences customer credit decisions, 99.9% might be the threshold — and if agents can't reach it, they shouldn't be deployed there.

Most failed agent deployments skipped this step.

3. Build in Human Oversight Explicitly — Then Remove It Gradually

Rather than deploying agents for autonomous operation from day one, structure deployments with explicit human review at critical decision points. Measure where agents perform reliably and where they don't. Expand agent autonomy in areas where reliability is demonstrated; maintain human oversight where it isn't.

This approach takes longer. It also produces deployments that actually work.

4. Treat Agent Infrastructure Seriously

Agents that operate in production require robust infrastructure: memory management, tool orchestration, observability, error handling, and security controls. Organizations that tried to run agents on improvised infrastructure discovered this quickly. The Model Context Protocol and emerging agent infrastructure standards are worth understanding before building.

5. Measure Business Outcomes, Not Agent Activity

The metric that separates successful agent deployments from failed ones is business outcome measurement. Not "how many tasks did the agent complete" — that's activity. Not "how fast did it run" — that's efficiency without context. The question is: what changed in the business because of the agent, and was that change worth the investment?

What Comes After the Trough

The trough of disillusionment is not where technologies go to die. It's where they get built into something real. Gartner's two-to-five year timeline for AI agents to reach the Slope of Enlightenment is roughly consistent with where the foundational work needs to happen: reliability improvements in underlying models, better evaluation frameworks, more mature security tooling, and organizational experience with what agents actually do well.

The businesses that will lead on the other side of this cycle are the ones investing now in the discipline of agent deployment — not the hype, not the demos, but the infrastructure, governance, and use-case specificity that turns AI agents from interesting experiments into production systems that deliver.

That's what the 5% are building. The window to join them is still open.

Internal linking opportunities: Agentic AI · AI Agent Security · AI Agent Infrastructure · Model Context Protocol · AI Bubble 2026

Schema recommendation: Article schema + FAQ schema. Strong "People Also Ask" targets: "Are AI agents worth it?", "Why are AI agents failing?", "What is the trough of disillusionment in AI?"

Suggested featured image concept: A Gartner-style hype curve graphic (dark aesthetic) with a glowing dot descending from the Peak into the Trough, labeled "AI Agents — 2026." Right side shows a small upward slope with "Where 5% succeed" callout. Stats bar below: 95% zero ROI · 29% at scale · $2.5T 2026 AI spend.