What Is a Vector Database? The AI Infrastructure Layer Every Business Needs

If you've ever wondered why your AI chatbot keeps giving generic answers despite having access to your company's documents, the problem almost certainly isn't the language model. It's what sits between your data and the model — or more precisely, what's missing. Vector databases are the infrastructure layer that gives AI systems the ability to search by meaning, not just by keyword, and without them, most enterprise AI use cases simply don't work at the scale businesses need.

By 2026, more than 30% of enterprises are expected to adopt vector databases to enrich their AI models with relevant business data. Understanding what they are, how they work, and when you need one is no longer optional knowledge for technology leaders.

What a Vector Database Actually Is

A traditional database stores information in rows and columns. You query it with exact matches — find the customer with ID 12345, or pull all transactions from March. It's precise, fast, and completely unsuitable for the way AI models understand information.

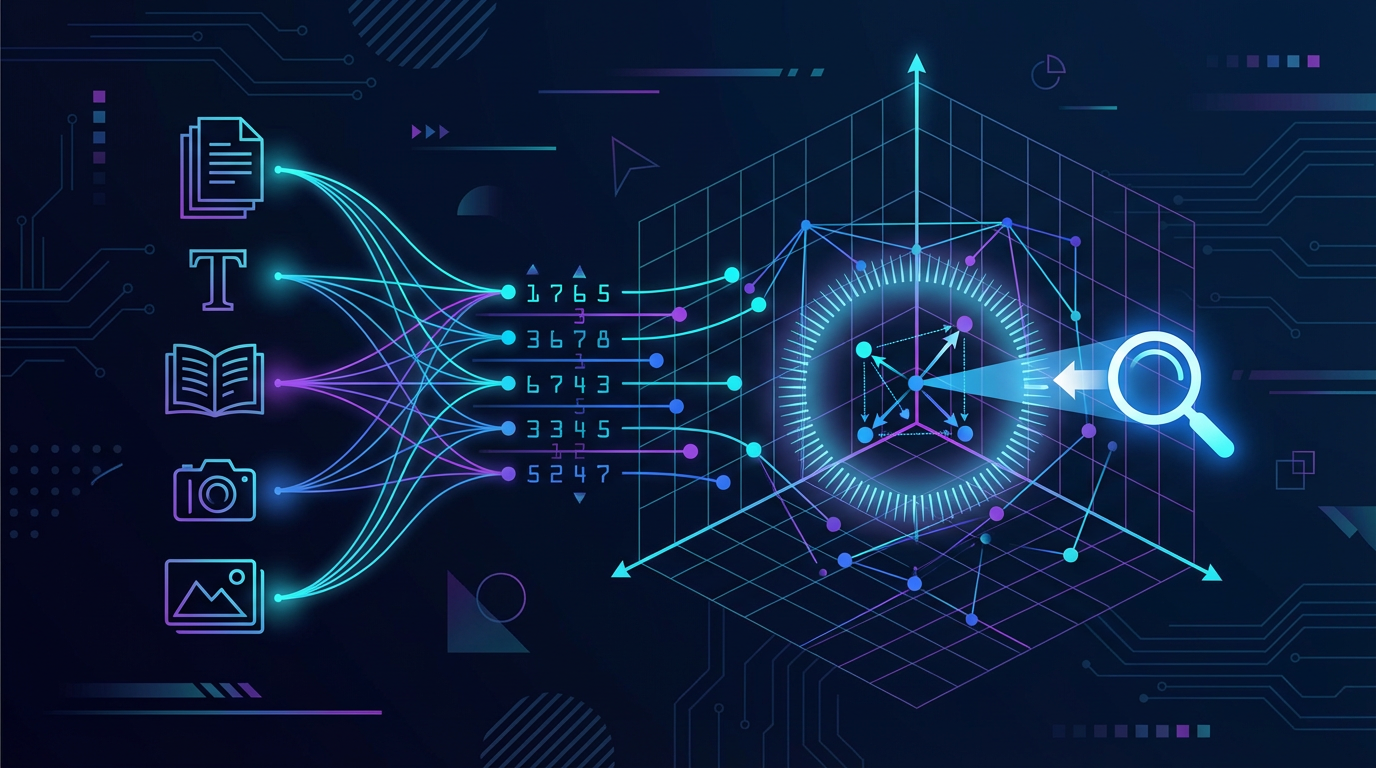

A vector database works differently at a foundational level. Alongside the raw data (or a reference to it), it stores embeddings: dense numerical representations of that data produced by machine learning models. A sentence typically becomes a list of 384 to 1,536 numbers, depending on the model. An image might become a 2,048-dimensional vector. A product description, a support ticket, a legal clause: all are converted into coordinates in a high-dimensional mathematical space where similar things sit close together.

When you query a vector database, you're not asking "find this exact phrase." You're asking "find everything that means something similar to this." A support ticket about "laptop won't turn on" retrieves results near "power failure," "battery dead," and "device not booting" — even without a single shared word. That's semantic search, and it's what makes AI genuinely useful for enterprise knowledge retrieval.

What Embeddings Are (and Why They Matter)

Embeddings are the raw material that vector databases are built to store and search. They're produced by embedding models — a class of machine learning model whose sole job is to convert content into vector form.

When OpenAI's embedding model processes the phrase "quarterly revenue targets," it outputs a fixed-length array of numbers that captures the semantic meaning of that phrase. Run "Q3 financial goals" through the same model and you get a numerically similar array, because both phrases mean roughly the same thing. The distance between those two vectors in mathematical space is small. The distance between "quarterly revenue targets" and "employee wellness policy" is large.
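
To make "distance between vectors" concrete, here is a minimal sketch using cosine similarity, one common way to compare embeddings. The three 4-dimensional vectors are hand-written stand-ins chosen for illustration; they are not real model output, and real embeddings have hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Made-up vectors standing in for the output of an embedding model.
revenue_targets = [0.9, 0.8, 0.1, 0.0]  # "quarterly revenue targets"
financial_goals = [0.8, 0.9, 0.2, 0.1]  # "Q3 financial goals"
wellness_policy = [0.1, 0.0, 0.9, 0.8]  # "employee wellness policy"

sim_close = cosine_similarity(revenue_targets, financial_goals)
sim_far = cosine_similarity(revenue_targets, wellness_policy)

print(f"similar phrases:   {sim_close:.3f}")
print(f"unrelated phrases: {sim_far:.3f}")
```

A similarity near 1 means the vectors point the same way; a value near 0 means they are unrelated. Vector databases usually let you choose between cosine similarity, dot product, and Euclidean distance.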

This numerical representation of meaning is what enables:

- Semantic search — finding relevant content even when exact keywords don't match

- RAG pipelines — supplying language models with relevant context at query time

- Recommendation engines — matching users to products or content by semantic similarity

- AI agent memory — giving agents a persistent, searchable record of past interactions and knowledge

Without embeddings stored in a fast, scalable retrieval system, none of these capabilities work reliably at production scale.

Why Traditional Databases Can't Do This Job

The distinction matters because many teams try to bolt vector search onto existing infrastructure and hit walls immediately.

Traditional relational databases (Postgres, MySQL, SQL Server) are optimized for exact-match queries on structured data and have no built-in notion of "similarity." Some now offer a vector data type, natively or through extensions such as pgvector, but searching across millions of high-dimensional vectors with acceptable latency depends on approximate nearest neighbor indexes (HNSW, IVF, ScaNN) that were not part of their original design.
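
To give a feel for what an index like IVF does, the sketch below partitions vectors into buckets around centroids and scans only the bucket nearest the query. It is a toy under stated assumptions: the centroids are hard-coded and hypothetical (a real IVF index learns them, typically with k-means), the vectors are two-dimensional, and no production library is involved.

```python
import math

def dist(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Hypothetical fixed centroids; a real IVF index learns these from the data.
CENTROIDS = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]

def nearest_centroids(vec, n):
    """Indices of the n centroids closest to vec."""
    order = sorted(range(len(CENTROIDS)), key=lambda i: dist(vec, CENTROIDS[i]))
    return order[:n]

def build_index(vectors):
    """Assign every vector id to the bucket of its nearest centroid."""
    buckets = {i: [] for i in range(len(CENTROIDS))}
    for vid, vec in vectors.items():
        buckets[nearest_centroids(vec, 1)[0]].append(vid)
    return buckets

def ivf_search(query, vectors, buckets, k=2, nprobe=1):
    """Scan only the nprobe closest buckets instead of every stored vector."""
    candidates = [vid for c in nearest_centroids(query, nprobe) for vid in buckets[c]]
    ranked = sorted(candidates, key=lambda vid: dist(query, vectors[vid]))
    return ranked[:k], len(candidates)

vectors = {
    "a": (1.0, 1.0), "b": (2.0, 0.5), "c": (9.0, 1.0),
    "d": (0.5, 9.5), "e": (9.5, 9.0), "f": (8.5, 9.5),
}
buckets = build_index(vectors)
hits, scanned = ivf_search((1.5, 1.0), vectors, buckets)
print(hits, f"scanned {scanned} of {len(vectors)} vectors")
```

The trade-off is the "approximate" in approximate nearest neighbor: a true neighbor sitting just across a bucket boundary can be missed, which is why real indexes expose a setting like `nprobe` to probe more buckets at the cost of speed.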

Enterprise data is roughly 80% unstructured — customer emails, support tickets, product images, contracts, meeting transcripts. Traditional databases force this information into simplified text fields, discarding the semantic content in the process. Vector databases are purpose-built to capture and retrieve that meaning at scale, handling similarity searches across billions of vectors in milliseconds.

How Vector Search Works in Practice

When a user submits a query to an AI system backed by a vector database, the following happens in sequence — typically in 10 to 50 milliseconds:

- Embed the query: The user's question is converted into a vector using the same embedding model that was used to index the knowledge base.

- Search the index: The vector database compares the query vector against stored vectors using an approximate nearest neighbor (ANN) algorithm. It doesn't scan every record; it navigates an index structure to zero in on the relevant region of the vector space.

- Apply filters: Metadata filters restrict matches by date range, document type, access permissions, or category. Hybrid search, which blends vector similarity with keyword scoring, is a related but separate technique that many systems also offer.

- Re-rank results: Top matches are scored and ordered by relevance.

- Return context: The retrieved chunks are passed to the language model as context for generating a grounded, accurate response.

This is the retrieval step in every RAG architecture. The vector database is what makes retrieval fast, accurate, and scalable — and it's the component that most teams underestimate when they first start building.
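
The five steps above can be sketched in a few lines. Everything here is an illustrative assumption: `embed` is a toy word-count function over a six-word vocabulary (it does not capture meaning the way a trained model does), the search is an exhaustive scan in place of an ANN index, and the "re-rank" step is a plain sort by similarity where a production system might use a dedicated re-ranking model.

```python
import math
from dataclasses import dataclass

# Tiny fixed vocabulary for the toy embedding (an assumption for illustration).
VOCAB = ["refund", "invoice", "password", "reset", "shipping", "delay"]

def embed(text):
    """Toy stand-in for an embedding model: word counts over VOCAB."""
    words = text.lower().split()
    return [float(words.count(term)) for term in VOCAB]

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

@dataclass
class Chunk:
    text: str
    doc_type: str
    vector: list

def make_chunk(text, doc_type):
    return Chunk(text, doc_type, embed(text))

def retrieve(query, chunks, doc_type, k=2):
    query_vector = embed(query)                                      # 1. embed the query
    scored = [(cosine(query_vector, c.vector), c) for c in chunks]   # 2. search (exhaustive here)
    scored = [(s, c) for s, c in scored if c.doc_type == doc_type]   # 3. apply metadata filter
    scored.sort(key=lambda pair: pair[0], reverse=True)              # 4. order by similarity
    return [c.text for s, c in scored[:k] if s > 0]                  # 5. return context for the model

chunks = [
    make_chunk("reset your password from the login page", "faq"),
    make_chunk("invoice and refund policy for annual plans", "policy"),
    make_chunk("shipping delay notices are emailed within a day", "faq"),
    make_chunk("password reset tokens expire after one hour", "policy"),
]

context = retrieve("how do i reset my password", chunks, doc_type="faq")
print(context)
```

Note that the policy chunk about reset tokens is just as similar to the query as the FAQ chunk, but the metadata filter removes it. Many vector databases apply such filters during the index search instead of afterwards, which avoids ending up with fewer than `k` results.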

Real Business Use Cases

Vector databases aren't abstract infrastructure. They power concrete, measurable outcomes across enterprise functions.

Enterprise Knowledge Search

Internal knowledge bases are the most immediate use case. Instead of keyword search across documentation (which fails when employees use different terminology than the document author), vector search retrieves the right policy, process, or answer based on semantic meaning. A new hire asking "what's the process for approving a vendor?" finds the right procurement documentation even if it's titled "Supplier Onboarding Framework."

Customer Support and Chatbots

Customer-facing AI assistants need to surface accurate, specific answers from product documentation, FAQs, and policy documents — in real time. Vector databases supply the retrieval layer that keeps those answers grounded in your actual content rather than generic model outputs. When product information changes, updating the vector database is a documentation update, not a model retrain.

Recommendation Engines

Streaming platforms, e-commerce sites, and content platforms use vector databases to match user behavior and preferences (encoded as embeddings) against available items (also embedded) to surface relevant recommendations. This semantic matching can outperform traditional collaborative filtering, particularly for items with little interaction history, because it captures contextual similarity, not just historical co-occurrence.
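
One simple way to picture this: represent a user as the average of the embeddings of items they have engaged with, then rank unseen items by similarity to that profile. The sketch below assumes hand-written 3-dimensional item vectors and a mean-vector profile; real systems use learned embeddings and usually more elaborate user models.

```python
import math

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def mean_vector(vectors):
    """Component-wise average of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

# Made-up item embeddings; titles and values are illustrative only.
ITEMS = {
    "space documentary": [0.9, 0.1, 0.0],
    "mars mission drama": [0.8, 0.3, 0.1],
    "baking competition": [0.0, 0.2, 0.9],
    "astronomy lecture": [0.95, 0.05, 0.05],
    "pastry masterclass": [0.1, 0.1, 0.95],
}

def recommend(watched, k=1):
    """Rank items the user has not seen by similarity to their profile vector."""
    profile = mean_vector([ITEMS[title] for title in watched])
    unseen = [title for title in ITEMS if title not in watched]
    return sorted(unseen, key=lambda t: cosine(profile, ITEMS[t]), reverse=True)[:k]

picks = recommend(["space documentary", "mars mission drama"], k=2)
print(picks)
```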

AI Agent Memory

This is the use case that's accelerating fastest in 2026. As covered in the AI agent infrastructure breakdown, agents need persistent, searchable memory across sessions. Vector databases give agents a long-term semantic memory — the ability to retrieve relevant past interactions, decisions, and context without loading an entire history into the model's context window. Without vector stores, production-grade AI agents simply don't function at scale.
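
As a rough sketch of the idea, the class below keeps memories as (vector, text) pairs and recalls only the few most similar to the current query, so the agent never has to load its full history. The class name, the memory texts, and the 3-dimensional vectors are all hypothetical; a real agent would get its vectors from an embedding model and persist them in a vector database.

```python
import heapq
import math

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

class AgentMemory:
    """In-memory stand-in for a vector store used as long-term agent memory."""

    def __init__(self):
        self._entries = []  # list of (vector, text) pairs

    def remember(self, vector, text):
        self._entries.append((vector, text))

    def recall(self, query_vector, k=2):
        """Return the k stored texts most similar to the query vector."""
        top = heapq.nlargest(k, self._entries, key=lambda e: cosine(query_vector, e[0]))
        return [text for _, text in top]

memory = AgentMemory()
memory.remember([0.9, 0.1, 0.0], "User prefers invoices in EUR")
memory.remember([0.1, 0.9, 0.0], "User's deployment region is eu-west")
memory.remember([0.0, 0.1, 0.9], "User asked to be contacted by email only")
memory.remember([0.8, 0.2, 0.1], "Last invoice was disputed in March")

# A billing-related query vector (made up) pulls back only billing memories.
context = memory.recall([0.85, 0.15, 0.05], k=2)
print(context)
```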

Fraud and Anomaly Detection

Enterprises embed operational signals — logs, transactions, support tickets, sensor data — and use vector similarity to detect when new signals deviate semantically from established patterns. This surfaces subtle anomalies that keyword-based rule systems would miss entirely.
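
A minimal version of this check flags a new vector when even its nearest neighbor among known-good examples is far away. The baseline vectors and the threshold below are made up for illustration; in practice the threshold would be tuned on historical data.

```python
import math

def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def is_anomalous(vector, baseline, threshold):
    """True when the closest baseline vector is farther away than `threshold`."""
    nearest = min(euclidean(vector, known) for known in baseline)
    return nearest > threshold

# Made-up embeddings of transactions considered normal.
baseline = [[0.20, 0.10], [0.25, 0.15], [0.30, 0.10], [0.22, 0.12]]
THRESHOLD = 0.2  # illustrative value, not a recommendation

normal = is_anomalous([0.24, 0.11], baseline, THRESHOLD)
odd = is_anomalous([0.90, 0.80], baseline, THRESHOLD)
print(normal, odd)
```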

The Leading Vector Databases in 2026

The ecosystem has matured significantly. The right choice depends on your scale, technical team, and infrastructure requirements.

Pinecone is the fully managed option — minimal operational overhead, strong performance, purpose-built for production RAG pipelines. Best for teams that want to move fast without managing infrastructure.

Milvus is the open-source leader for enterprise scale and a graduated project of the LF AI & Data Foundation, part of the Linux Foundation. It separates storage and compute for independent scaling and handles billion-vector datasets. Best for organizations with dedicated engineering resources and massive embedding collections.

Weaviate offers strong multimodal capabilities and direct integrations with embedding providers (OpenAI, Cohere, Hugging Face). Best for teams building applications that combine text and image retrieval.

Qdrant is built in Rust for consistently low latency under heavy load, with excellent filtered vector search. Best for applications that combine similarity matching with complex metadata constraints — personalization, access-controlled document search.

Chroma is the developer-friendly, open-source option with deep LangChain integration. Best for prototyping and proof-of-concept work before committing to production infrastructure.

A growing trend worth noting: major data platforms such as Snowflake, BigQuery, and Databricks are building vector search directly into their products. For organizations already running on these platforms, this integration path reduces architectural complexity considerably.

What to Consider Before You Choose

A few factors determine which direction to go:

Scale. How many vectors will you store — millions or billions? The answer changes the shortlist significantly. Chroma handles small-to-medium workloads well; Milvus is built for the high end.
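
A quick back-of-the-envelope calculation shows why the millions-versus-billions question matters. The sketch assumes vectors are stored as 32-bit floats (4 bytes per value) and counts only the raw vectors; index structures, metadata, and replicas add more on top.

```python
def raw_vector_bytes(num_vectors, dimensions, bytes_per_value=4):
    """Bytes needed for the raw vectors alone (float32 by default)."""
    return num_vectors * dimensions * bytes_per_value

GIB = 1024 ** 3

for count in (1_000_000, 1_000_000_000):
    size = raw_vector_bytes(count, dimensions=1536)
    print(f"{count:>13,} vectors x 1536 dims = {size / GIB:,.1f} GiB")
```

At 1,536 dimensions, a million vectors need roughly 5.7 GiB and fit comfortably in memory on a single machine. A billion vectors need about a thousand times that, which is where distributed storage, quantization, or lower-dimensional embeddings enter the conversation.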

Query latency requirements. Customer-facing applications typically need sub-100ms responses. Qdrant and Pinecone are both strong candidates for this kind of workload, though you should benchmark with your own data. Internal tools can tolerate more.

Multimodal needs. If your use case involves images, audio, or video alongside text, look at Weaviate or multimodal embedding pipelines before committing to a text-only solution.

Managed vs. self-hosted. With managed services (Pinecone), you pay a service fee in exchange for operational simplicity. With self-hosted open-source options (Milvus, Qdrant, Weaviate), you take on the engineering burden in exchange for control, customization, and data sovereignty.

Compliance. In regulated industries, data sovereignty often dictates self-hosted infrastructure. Qdrant and Milvus both support private deployment with enterprise access controls.

The Connection to Your Broader AI Stack

Vector databases don't stand alone. They sit at the retrieval layer of a larger AI agent infrastructure, serving as the persistent knowledge store that connects raw enterprise data to language models and agents.

The flow looks like this: your documents and data are ingested, chunked, and passed through an embedding model. The resulting vectors are stored and indexed in your vector database. When a user or agent queries the system, the vector database retrieves the most relevant chunks, which are passed as context to the language model. The model generates a grounded response.
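
The ingestion half of that flow can be sketched as follows. The names `Record`, `InMemoryIndex`, and `ingest` are hypothetical, the embedding is a toy word count over a four-word vocabulary, and the chunking is a naive fixed-size word split; the point is only the shape of what gets stored: an ID, a vector, and metadata that lets you return the original text.

```python
from dataclasses import dataclass

# Tiny vocabulary for the toy embedding (an assumption for illustration).
VOCAB = ["vendor", "approval", "invoice", "contract"]

def embed(text):
    """Toy stand-in for an embedding model: word counts over VOCAB."""
    words = text.lower().split()
    return [float(words.count(term)) for term in VOCAB]

@dataclass
class Record:
    record_id: str
    vector: list
    metadata: dict

class InMemoryIndex:
    """Dictionary-backed stand-in for a vector database collection."""

    def __init__(self):
        self.records = {}

    def upsert(self, record):
        # Insert, or overwrite an existing record that has the same ID.
        self.records[record.record_id] = record

def ingest(doc_id, text, index, chunk_size=8):
    """Chunk a document, embed each chunk, and store it with its metadata."""
    words = text.split()
    for n, start in enumerate(range(0, len(words), chunk_size)):
        chunk = " ".join(words[start:start + chunk_size])
        index.upsert(Record(f"{doc_id}#{n}", embed(chunk), {"source": doc_id, "text": chunk}))

index = InMemoryIndex()
doc = ("vendor approval starts with a signed contract and a purchase request "
       "then finance reviews the invoice terms before final approval is granted")
ingest("supplier-onboarding", doc, index)
ingest("supplier-onboarding", doc, index)  # re-ingesting overwrites, it does not duplicate
print(sorted(index.records))
```

Deterministic chunk IDs are a deliberate choice here: when a document changes, re-ingesting it replaces the old vectors, which is what makes a content update a data operation and not a model retrain.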

This is why the choice between fine-tuning and RAG ultimately leads back to vector databases: RAG-based architectures live or die on retrieval quality, and retrieval quality is a vector infrastructure problem. Optimizing your embedding model selection, your chunking strategy, and your indexing configuration will do more for your AI output quality than switching language models in most cases.
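
Chunking strategy is the easiest of those three levers to illustrate. One common approach is fixed-size windows with some overlap, so that a sentence straddling a boundary still appears whole in at least one chunk. The sketch below splits on whitespace for simplicity; the window and overlap sizes are arbitrary, and production pipelines often split on tokens, sentences, or document structure instead.

```python
def chunk_words(text, size, overlap):
    """Split text into windows of `size` words that share `overlap` words with the previous window."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks

text = "one two three four five six seven eight nine ten"
chunks = chunk_words(text, size=4, overlap=1)
for chunk in chunks:
    print(chunk)
```

Larger chunks carry more context per retrieval but dilute the embedding; smaller chunks match more precisely but may lose the surrounding meaning. Overlap softens the boundary problem at the cost of storing more vectors.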

The Bottom Line

Vector databases are the infrastructure layer that makes the difference between AI that searches your knowledge base and AI that actually understands it. They're the reason semantic search returns the right answer even when the user's words don't match the document's words, and they're the memory system that allows AI agents to operate persistently across sessions at enterprise scale.

If you're building a RAG pipeline, deploying a knowledge-base chatbot, or planning any AI agent infrastructure, a vector database isn't optional. The question isn't whether you need one — it's which one fits your scale, your team, and your compliance requirements. Start with a managed solution to validate the use case, then evaluate self-hosted options as your embedding volumes and operational requirements grow.

Frequently Asked Questions

What's the difference between a vector database and a regular database? Traditional databases store structured data and support exact-match queries. Vector databases store high-dimensional numerical representations (embeddings) of unstructured data and support similarity search — finding records that are semantically close to a query, not just textually identical.

Do I need a vector database if I'm using RAG? Yes, in almost every production scenario. RAG requires fast, accurate retrieval of relevant content at query time. A vector database is the retrieval engine that makes this possible at scale. Without one, retrieval becomes a bottleneck in both speed and accuracy.

Can I add vector search to my existing database? Some traditional databases now support vector extensions — pgvector for PostgreSQL is the most common. This works well for small-to-medium datasets. For production-scale AI workloads with millions to billions of vectors and low-latency requirements, a purpose-built vector database typically outperforms extensions on general-purpose systems.

How do embeddings get created? An embedding model converts your content — text, images, audio — into a fixed-length numerical vector. Common embedding models include OpenAI's text-embedding-3 series, Cohere's Embed models, and open-source options like Sentence Transformers. The key constraint: the same embedding model must be used for both indexing your data and embedding queries at search time.
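
One way to enforce that constraint is to record the model name alongside the index and reject queries embedded with anything else. The sketch below uses hypothetical names (`Collection`, `validate_query`, "model-a", "model-b"). Note that checking dimensions alone is not enough: two different models can produce vectors of the same length that live in unrelated spaces.

```python
class ModelMismatchError(ValueError):
    """Raised when a query was embedded with a different model than the index."""

class Collection:
    """Hypothetical collection that remembers which model built its vectors."""

    def __init__(self, model_name, dimensions):
        self.model_name = model_name
        self.dimensions = dimensions

    def validate_query(self, model_name, vector):
        if model_name != self.model_name:
            raise ModelMismatchError(
                f"index built with {self.model_name!r}, query embedded with {model_name!r}"
            )
        if len(vector) != self.dimensions:
            raise ValueError(f"expected {self.dimensions} dimensions, got {len(vector)}")
        return vector

collection = Collection("model-a", dimensions=4)

ok = collection.validate_query("model-a", [0.1, 0.2, 0.3, 0.4])

try:
    # Same dimensionality, different model: still rejected.
    collection.validate_query("model-b", [0.4, 0.3, 0.2, 0.1])
    rejected = False
except ModelMismatchError:
    rejected = True

print(ok, rejected)
```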

What is the cost of running a vector database? Managed services like Pinecone typically charge based on the volume of vectors stored and the queries executed. Open-source options like Milvus or Qdrant are free to license but require infrastructure and engineering investment. For most teams starting out, managed services are cost-effective until vector counts reach the tens of millions, at which point self-hosting often becomes more economical.