What Is AI Sovereignty — and Why 93% of Executives Say It's Now a Business Priority

Most businesses treating AI sovereignty as a compliance checkbox are misreading what it actually is. It's not a data residency form you fill out. It's a strategic question: who controls your AI stack — and what happens to your business if the answer is "someone else"?

According to IBM's 2026 Institute for Business Value research, 93% of executives now say factoring AI sovereignty into business strategy is a must. That number didn't appear out of nowhere. It's the result of geopolitical fractures, tightening regulation, and the quiet realization that most organizations have handed over enormous leverage to a handful of AI providers they can't audit, override, or exit without major disruption.

What AI Sovereignty Actually Means

IBM defines AI sovereignty as an organization's capacity to control its artificial intelligence technology stack — the infrastructure, the data, the models, and the operations. That's the clean definition. In practice, it covers four things that most businesses have not fully worked through.

Data sovereignty — where your data lives, who can access it, how it flows through AI pipelines, and whether it's subject to foreign jurisdiction. The U.S. CLOUD Act, for example, allows American authorities to compel disclosure of data held by U.S. providers regardless of where that data is physically stored. If you're using a U.S.-headquartered cloud AI service and your data is sensitive, this is not theoretical.

Model sovereignty — who owns and controls the models processing your data. When you send inputs to a third-party LLM API, you typically have limited visibility into how outputs are generated, whether your data influences future training, or what happens to your queries in the provider's pipeline.

Operational sovereignty — your ability to maintain continuity, audit operations, and modify configurations independently. If your AI provider goes down, changes pricing, or gets acquired, can you keep operating? Most organizations today cannot.

Regulatory sovereignty — your capacity to demonstrate compliance to regulators on demand. With the EU AI Act now entering enforcement and data localization requirements expanding globally, "we use a third-party provider and trust them" is not a compliant governance posture.

Why This Became Urgent in 2026

AI sovereignty wasn't a boardroom conversation three years ago. It became one because three pressures converged at once.

Regulatory pressure accelerated faster than most organizations anticipated. The EU AI Act is scheduled to become generally applicable on August 2, 2026, bringing enforceable obligations for high-risk AI systems, with some provisions phased in on other dates. GDPR fines for AI-related violations reached €2.3 billion in 2025 alone, a 38% year-over-year increase. Seventy-one percent of organizations cite cross-border data transfer compliance as their top regulatory challenge. Regulators are no longer asking politely.

Geopolitical fractures made third-party dependency visible. The U.S.-China semiconductor rivalry, export controls on AI chips, and Europe's deliberate push toward digital sovereignty have made it impossible to ignore how concentrated AI capability is. Three U.S.-based hyperscalers now account for roughly 70% of European cloud demand. In AI infrastructure specifically, the U.S. and China together control over 90% of global data center capacity. For organizations in regulated industries or government-adjacent sectors, dependency on foreign-controlled infrastructure is now a governance risk — not just a vendor management question.

The cost of getting it wrong became measurable. A recent CIO analysis found that while 95% of enterprise leaders plan to build their own AI and data platforms, only 13% are currently on track. Those who have established sovereign AI foundations are realizing up to five times the ROI of their peers. That gap has the attention of CFOs.

The Four Components of a Sovereign AI Strategy

1. Know Where Your Data Is and Who Can Reach It

This sounds obvious. It isn't. Most organizations operate across legacy systems, multi-cloud environments, and siloed data estates where nobody has a complete map of where data flows once it enters an AI pipeline.

A working sovereignty strategy starts with a data audit: what data is being processed by which AI systems, where it's stored at rest and how it moves in transit, and what legal jurisdictions apply. Encryption and key management matter here. In a hold-your-own-key architecture, the organization keeps the encryption keys, so a cloud provider cannot decrypt the data on its own, even when it is legally compelled to hand over what it stores.
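The audit described above can be sketched as a small inventory check. This is a minimal illustration, not a complete audit tool: the record fields, the category labels in `SENSITIVE_CATEGORIES`, the system names, and the rule in `needs_review` are all assumptions made for the example. Real jurisdictional analysis needs legal input.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

# Hypothetical category labels; a real scheme would come from your own data policy.
SENSITIVE_CATEGORIES = {"customer_pii", "health_records", "financial_records"}


@dataclass(frozen=True)
class AISystemRecord:
    """One row of an illustrative AI data-flow inventory."""
    name: str
    data_categories: Tuple[str, ...]  # kinds of data the system processes
    storage_region: str               # where the data sits at rest
    provider_hq: str                  # where the provider is headquartered
    customer_held_keys: bool          # True if only the organization holds the keys


def applicable_jurisdictions(record: AISystemRecord) -> Set[str]:
    """Jurisdictions that may reach the data: storage location plus provider HQ."""
    return {record.storage_region, record.provider_hq}


def needs_review(record: AISystemRecord, home: str) -> bool:
    """Flag sensitive data exposed to a non-home jurisdiction without customer-held keys."""
    sensitive = bool(SENSITIVE_CATEGORIES & set(record.data_categories))
    foreign = bool(applicable_jurisdictions(record) - {home})
    return sensitive and foreign and not record.customer_held_keys


def audit(inventory: List[AISystemRecord], home: str) -> List[str]:
    """Return the names of systems that warrant a closer look."""
    return [r.name for r in inventory if needs_review(r, home)]


# Made-up example inventory.
inventory = [
    AISystemRecord("support-chatbot", ("customer_pii",), "EU", "US", False),
    AISystemRecord("claims-triage", ("health_records",), "EU", "EU", False),
    AISystemRecord("marketing-copy", ("public_web",), "US", "US", False),
    AISystemRecord("fraud-scoring", ("financial_records",), "EU", "US", True),
]
flagged = audit(inventory, home="EU")
```

In this made-up inventory only `support-chatbot` is flagged: it handles sensitive data, its provider is headquartered outside the home jurisdiction, and the organization does not hold the keys.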

2. Evaluate Your Model Dependency Risk

If your core AI operations run entirely on API calls to a single third-party foundation model, you have a concentration risk that belongs on your risk register. This doesn't mean you need to train your own models — that's impractical for most organizations. It means understanding your exposure and having a plan.

Open-source AI models have made this more achievable. Fine-tuned open-weight models running on your own infrastructure give you full control over model behavior, data handling, and operational continuity. For organizations in healthcare, legal, or finance, this is increasingly the architecture regulators expect.
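One way to reduce single-provider exposure is to put model calls behind a thin interface that can fall back to a second backend. The sketch below is illustrative only: `hosted_api` and `local_open_weight` are stand-in functions rather than real client libraries, and a production version would add timeouts, retries, and output-quality checks.

```python
from typing import Callable, List, Tuple

# A backend is any function that takes a prompt and returns text.
Backend = Callable[[str], str]


class AllBackendsFailed(RuntimeError):
    """Raised when every configured backend raised an error."""


def complete_with_fallback(
    prompt: str, backends: List[Tuple[str, Backend]]
) -> Tuple[str, str]:
    """Try each backend in order; return (backend_name, output) from the first that works."""
    errors = []
    for name, call in backends:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{name}: {exc}")
    raise AllBackendsFailed("; ".join(errors))


# Stand-in backends for illustration (hypothetical, not real client code).
def hosted_api(prompt: str) -> str:
    raise ConnectionError("provider unavailable")


def local_open_weight(prompt: str) -> str:
    return f"[local] {prompt}"


used, answer = complete_with_fallback(
    "Summarize the contract",
    [("hosted_api", hosted_api), ("local_open_weight", local_open_weight)],
)
```

Here the simulated outage of the hosted backend causes the call to be served by the local stand-in. The point of the design is that application code depends on the interface, not on any one provider.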

3. Build for Auditability

Regulators increasingly expect organizations to demonstrate compliance, not merely assert it. For AI systems, this means maintaining detailed logs of training data provenance and inference decisions, and being able to explain outputs on demand.

The EU AI Act's requirements for high-risk systems include documentation of training data, risk assessments, and human oversight mechanisms. Organizations that have embedded auditability into their AI architecture from the start will have a significant compliance advantage over those retrofitting it later.
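As one illustration of built-in auditability, inference events can be written to a hash-chained log, so that later edits to any entry become detectable. This is a minimal sketch under assumptions: the event fields are invented for the example, the log lives in memory, and nothing here is prescribed by the EU AI Act or any other regulation.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: dict, prev_hash: str) -> str:
    """SHA-256 over a canonical JSON encoding of the event and the previous hash."""
    payload = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; return False if any entry was altered or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical inference events.
audit_log = []
append_entry(audit_log, {"model": "credit-scorer-v3", "input_id": "req-001", "decision": "refer"})
append_entry(audit_log, {"model": "credit-scorer-v3", "input_id": "req-002", "decision": "approve"})
chain_ok = verify_chain(audit_log)
```

A hash chain only shows that the log has not been changed after the fact. It does not by itself explain a model's output, so it complements documentation and human oversight rather than replacing them.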

4. Adopt a Hybrid Architecture

Microsoft, IBM, and a growing number of enterprise AI vendors are now explicitly designing for sovereign deployment — meaning AI infrastructure that can operate in disconnected or fully on-premises environments without sacrificing capability. Microsoft's Sovereign Cloud, released in February 2026, allows organizations to run mission-critical AI workloads with no cloud connectivity inside their own sovereign operational boundary.

A hybrid approach — matching architecture to the sensitivity and regulatory profile of each workload — is what most organizations should be building toward. Not everything needs to be locked down. But your highest-sensitivity workloads almost certainly shouldn't be running on shared cloud infrastructure governed by another country's legal framework.
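Matching architecture to workload sensitivity can be expressed as a simple placement rule. The sketch below is an assumption-laden illustration: the three sensitivity tiers, the three deployment targets, and the rule itself are invented for the example, and a real policy would reflect your own classification standards and legal advice.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Illustrative data classification tiers (hypothetical)."""
    PUBLIC = 0
    INTERNAL = 1
    REGULATED = 2


def choose_deployment(sensitivity: Sensitivity, residency_required: bool) -> str:
    """Pick a deployment target for a workload under an illustrative policy."""
    if sensitivity is Sensitivity.REGULATED:
        # Highest-sensitivity workloads stay inside the organization's own boundary.
        return "on_premises"
    if residency_required:
        # Data must stay in-jurisdiction, but full isolation is not required.
        return "sovereign_region_cloud"
    # Everything else can use shared cloud infrastructure.
    return "shared_cloud"


# Hypothetical workloads: (sensitivity tier, residency requirement).
workloads = {
    "patient-summaries": (Sensitivity.REGULATED, True),
    "hr-policy-search": (Sensitivity.INTERNAL, True),
    "marketing-drafts": (Sensitivity.PUBLIC, False),
}
placement = {name: choose_deployment(s, r) for name, (s, r) in workloads.items()}
```

Keeping the rule in one function makes the placement policy reviewable and testable, which also helps when a regulator asks why a given workload runs where it does.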

What This Means for Industries Most Affected

Financial services — Both the EU AI Act and U.S. SEC AI examinations create enforceable obligations around explainability and auditability. AI regulation is no longer coming — it's active in this sector.

Healthcare — HIPAA compliance in AI requires knowing exactly where protected health information goes when it enters a model pipeline. Most generic cloud AI services cannot provide this assurance.

Public sector and critical infrastructure — Several governments are now explicitly restricting the use of foreign-controlled AI in defense-adjacent or critical national infrastructure contexts. The UK's ICO and Ofcom recently issued formal demands to xAI regarding its Grok model, signaling that regulatory scrutiny of AI providers is intensifying.

Getting Started: A 120-Day Framework

Organizations serious about AI sovereignty don't need to overhaul everything at once. A structured 120-day approach gives most enterprises a workable path.

- Days 1–30: Audit and map. Document all AI systems in use, the data flowing through them, and the jurisdictions that apply. Identify your highest-risk workloads.

- Days 31–60: Governance architecture. Define data classification standards, access controls, and documentation requirements for AI systems. Assign clear ownership.

- Days 61–90: Infrastructure decisions. Evaluate hybrid deployment options for high-sensitivity workloads. Assess open-source model alternatives where third-party model dependency creates unacceptable risk.

- Days 91–120: Operationalize. Bring model governance, vector indexing, inference pipelines, and audit capabilities inside your governed perimeter.

The Bottom Line

AI sovereignty isn't about building your own chips or training your own foundation models from scratch. It's about maintaining meaningful control over the AI systems that are increasingly making or influencing decisions in your business — and being able to prove that control to regulators, customers, and partners who will ask.

The organizations that treat this as a strategic capability rather than a compliance exercise are the ones reported to be realizing up to five times the ROI of their peers. The time to build these foundations is now, before enforcement intensifies.


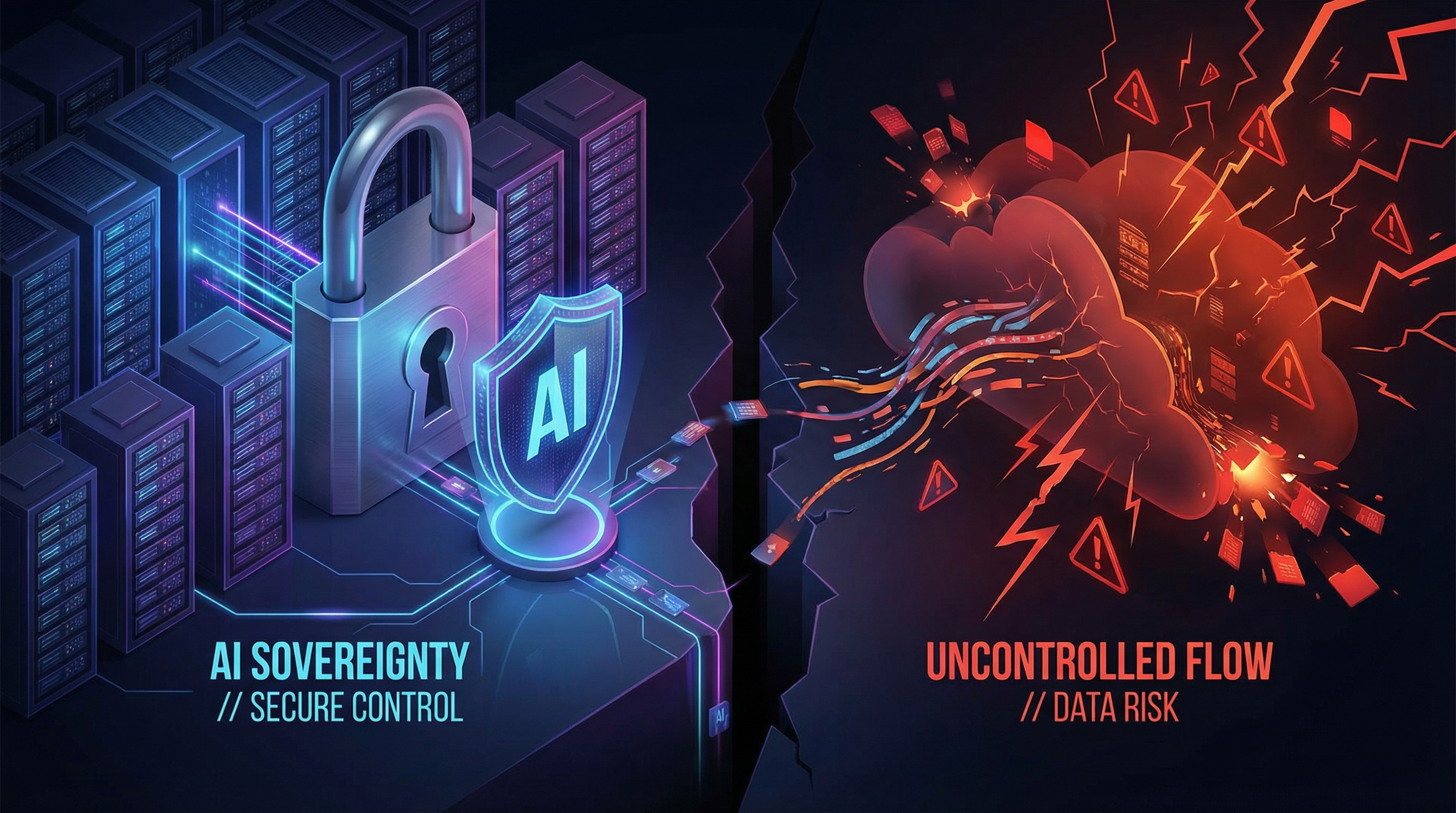
